Why is My Minecraft Server Overloaded? Quick Troubleshooting Guide

Practical troubleshooting for an overloaded Minecraft server: diagnose load, tune RAM, optimize plugins, and adjust hosting for stable TPS.

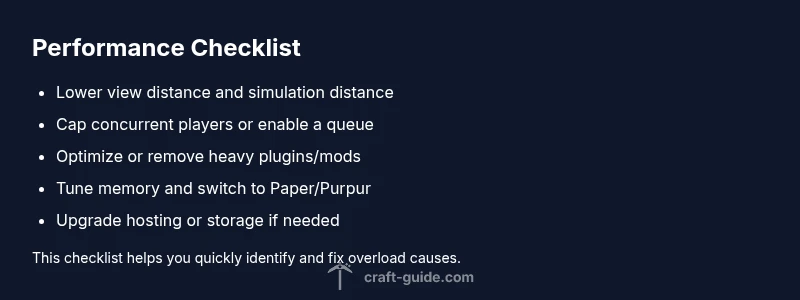

Why is my Minecraft server overloaded? Overloads usually come from too many players, heavy plugins, or insufficient RAM. Start by lowering view distance, capping concurrent players, and allocating memory properly. If issues persist, optimize plugins to reduce resource use or upgrade your hosting.

Why Your Minecraft Server Is Overloaded

Why is my Minecraft server overloaded? When demand exceeds available resources, lag, rubber-banding, and delayed actions appear. According to Craft Guide, overload commonly happens when the number of players or the impact of plugins pushes a server beyond its configured resources. In practice you’ll see TPS dips, long tick times, and slower command execution because the main server thread can no longer finish each tick within its 50 ms budget (20 ticks per second). This is not just a hardware issue; misconfigurations amplify the problem. The short answer to the root cause is resource pressure from concurrent players, plugins, and I/O activity. The Craft Guide team found that stability often hinges on aligning hardware headroom with carefully tuned software settings. In 2026, many overloads are preventable with proactive tuning, staged testing, and sensible limits. By understanding how players, plugins, and hardware interact, you can apply targeted fixes that restore smooth gameplay.

Common Causes of Overload

- Excessive player count: Every player adds chunks to load, entities to track, and actions to process, so the work done in each tick grows with the number of players and entities.

- Heavy plugins or mods: Spigot/Paper plugins performing heavy tasks every tick or during events can spike CPU usage.

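- Improper memory allocation: A Java heap that is too small causes frequent garbage collection (GC thrashing) and possible out-of-memory errors; one that is too large for the machine can lengthen GC pauses and leave the OS short of memory.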

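- Large view distance and simulation distance: A higher view distance makes the server load and send more chunks to each client, and a higher simulation distance makes it tick more of them, so server computation rises with both.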

- Disk I/O and network bottlenecks: Slow storage delays chunk loading and saving, and limited bandwidth slows the data sent to players.

- World size and generation settings: Generating new chunks, loading large explored areas, and running backups can spike disk I/O.

- Automatic backups and world editing during peak times: These heavy tasks can block or slow the main server thread.
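Because the server keeps a roughly square area of chunks around each player, the work grows with the square of the view distance, not linearly. The short Python sketch below makes that arithmetic concrete. It assumes a simple (2r + 1) x (2r + 1) square per player and ignores chunks shared by players standing near each other, so treat the numbers as an upper-bound illustration rather than exact server behavior; the function name is hypothetical.

```python
def chunks_per_player(view_distance: int) -> int:
    """Chunks in a (2r + 1) x (2r + 1) square centered on the player's chunk."""
    side = 2 * view_distance + 1
    return side * side


# Compare a few common view-distance settings
for r in (6, 8, 10, 12):
    print(f"view-distance {r:>2}: {chunks_per_player(r):>3} chunks per player")
```

Under these assumptions, dropping view distance from 10 to 6 cuts the per-player area from 441 to 169 chunks, roughly 60% less chunk work before any other tuning.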

Quick Fixes You Can Apply Right Now

- Lower view distance to 6-8 chunks and reduce simulation distance. This dramatically reduces chunk loading and ticking work for all players. Tip: set view-distance and simulation-distance in server.properties and restart.

- Cap concurrent players or enable a simple whitelist during peak times. A small, predictable user base helps maintain TPS.

- Allocate memory wisely: set -Xms and -Xmx to sensible values (e.g., 2-4 GB for small servers, more for larger ones). Avoid over-allocating: a heap larger than the machine can spare leaves the OS short of memory and can lengthen GC pauses.

- Disable or temporarily remove resource-heavy plugins or mods. Re-enable one by one to find culprits. Tip: use Paper’s timings report to identify heavy plugins.

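- Tune server settings: reduce entity tracking and activation ranges (entity-tracking-range and entity-activation-range in spigot.yml) if appropriate; garbage-collector tuning belongs in the JVM launch flags, not in server.properties. Tip: profile performance in a test environment before applying changes live.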

- Restart to clear memory pressure and verify improvements. If not improved, proceed to diagnostic steps.
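If you manage several servers, the view-distance and player-cap changes can be scripted. The Python sketch below rewrites chosen keys in server.properties and leaves every other line untouched. The function name set_properties is hypothetical, the values you pass are examples only, and simulation-distance exists only on Minecraft 1.18 and later. Edit the file while the server is stopped, then start it again so the new values take effect.

```python
from pathlib import Path
from typing import Dict


def set_properties(path: Path, updates: Dict[str, str]) -> None:
    """Set selected keys in a server.properties file, keeping all other lines as-is."""
    remaining = dict(updates)
    out = []
    for line in path.read_text(encoding="utf-8").splitlines():
        key, sep, _ = line.partition("=")
        key = key.strip()
        # Only rewrite real key=value lines (not comments) whose key we want to change
        if sep and not line.lstrip().startswith("#") and key in remaining:
            out.append(f"{key}={remaining.pop(key)}")
        else:
            out.append(line)
    # Append keys that were missing from the file (e.g. simulation-distance in an old config)
    out.extend(f"{k}={v}" for k, v in remaining.items())
    path.write_text("\n".join(out) + "\n", encoding="utf-8")
```

For example, set_properties(Path("server.properties"), {"view-distance": "8", "simulation-distance": "6", "max-players": "30"}) applies the view-distance and player-cap fixes in one step.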

Diagnostic Checklist to Narrow Down the Problem

- Check TPS: A sustained TPS below 19 indicates overload. Watch timings and server CPU usage over the last 30 minutes.

- Review memory usage: Is the heap consistently near max? If so, adjust -Xmx/-Xms or add RAM.

- Inspect plugins/mods: Identify any with high CPU time in timings or logs.

- Analyze disk I/O: Are there disk-heavy operations during peak times? Consider faster storage for world data.

- Examine network metrics: Packet loss or high latency can worsen perceived lag.

- Run a controlled test: Temporarily reduce players or disable plugins to see if TPS recovers.

- Document findings: Keep a simple log of changes and outcomes to guide decisions.

- When to escalate: If you cannot safely adjust RAM or hardware, consider professional hosting support.
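The TPS and heap checks in this list can be turned into a small script once you have exported readings from your monitoring tool. The Python sketch below assumes you already have a list of TPS samples and a list of used-heap readings in MB (how you collect them depends on your tooling). It uses the 19 TPS threshold from the checklist plus an assumed 90% heap ratio, and it averages several heap readings because a single snapshot can look high just before a garbage collection. The function name diagnose is hypothetical.

```python
from statistics import mean
from typing import List, Sequence


def diagnose(tps_samples: Sequence[float], heap_used_mb: Sequence[float],
             heap_max_mb: float, tps_threshold: float = 19.0,
             heap_ratio: float = 0.9) -> List[str]:
    """Turn raw TPS and heap readings into short findings.

    Thresholds are illustrative: 19 TPS matches the checklist,
    0.9 (90% of the max heap) is an assumption.
    """
    if not tps_samples:
        return ["no TPS samples collected"]
    findings = []
    avg_tps = mean(tps_samples)
    below = sum(1 for t in tps_samples if t < tps_threshold)
    if avg_tps < tps_threshold:
        findings.append(f"sustained overload: average TPS {avg_tps:.1f}")
    elif below:
        findings.append(
            f"intermittent dips: {below} of {len(tps_samples)} samples below {tps_threshold}")
    # Average several heap readings; one snapshot can look high right before a GC
    if heap_used_mb and mean(heap_used_mb) / heap_max_mb >= heap_ratio:
        findings.append(
            f"heap near max: average {mean(heap_used_mb):.0f} of {heap_max_mb:.0f} MB")
    return findings or ["no overload detected in these samples"]
```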

Long-Term Solutions for Stable Performance

- Move to performance-optimized server software: Paper or Purpur includes performance optimizations and better profiling tools (such as timings) than plain Spigot. Keep it updated and use its profiling reports to guide tuning.

- Fine-tune JVM flags for your hardware: Use garbage collector options suitable for server load (e.g., G1GC). Align heap size with available RAM and OS headroom.

- Upgrade hardware or hosting: If you regularly hit peak capacity, consider more RAM, faster CPU, or dedicated hosting. For small to medium servers, consider a premium hosting plan or moving to a more capable data center.

- Prune plugins and entities: Remove deprecated plugins, keep only essential mods, and adjust mob cap and entity counts to avoid runaway spawns.

- Maintain regular maintenance windows: Schedule backups and world edits for off-peak hours so they don’t interfere with peak play.

- Monitor continuously: Deploy a lightweight monitoring tool to track TPS, memory, CPU, and I/O.
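The JVM advice above can be captured in a small launch-command builder. This Python sketch assumes a fixed amount of RAM reserved for the operating system (os_headroom_gb, a hypothetical default of 2 GB), sets -Xms equal to -Xmx (a common choice for dedicated servers), enables G1GC with -XX:+UseG1GC, and uses paper.jar as a placeholder jar name; --nogui disables the server's graphical console. Adjust the headroom to your host: it is a starting point, not a tuned profile.

```python
import shlex
from typing import List


def build_launch_command(total_ram_gb: int, os_headroom_gb: int = 2,
                         jar: str = "paper.jar") -> List[str]:
    """Build a java command line with a fixed-size heap and the G1 collector.

    Assumptions: os_headroom_gb is left for the OS and other processes,
    and -Xms equals -Xmx so the heap does not resize at runtime.
    """
    heap_gb = total_ram_gb - os_headroom_gb
    if heap_gb < 1:
        raise ValueError("not enough RAM left for the heap after OS headroom")
    return [
        "java",
        f"-Xms{heap_gb}G",   # initial heap size
        f"-Xmx{heap_gb}G",   # maximum heap size
        "-XX:+UseG1GC",      # G1 garbage collector
        "-jar", jar,         # placeholder jar name
        "--nogui",
    ]


# prints: java -Xms6G -Xmx6G -XX:+UseG1GC -jar paper.jar --nogui
print(shlex.join(build_launch_command(8)))
```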

Safety, Backups, and Best Practices

- Always back up the world and configs before making major changes. Store backups offsite if possible.

- Test changes in a staging environment when possible to avoid live outages.

- Document every change so you can rollback if needed.

- Communicate with players: Let them know when to expect maintenance windows and restarts.

- Practice safe power and cooling: Ensure the host hardware remains within temperature ranges to avoid throttling.
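Offsite copies start with a reliable local archive. The Python sketch below copies common server folders into a timestamped zip using only the standard library, then checks the archive with a CRC test as a basic integrity check. The folder names are Spigot/Paper defaults and may differ on your server, and the function name backup is hypothetical. For a consistent copy, stop the server, or run save-off and save-all before archiving and save-on afterward.

```python
import shutil
import zipfile
from datetime import datetime
from pathlib import Path
from typing import Sequence

# Spigot/Paper default names; adjust to match your server layout
DEFAULT_ITEMS = ("world", "world_nether", "world_the_end", "plugins", "server.properties")


def backup(server_dir: Path, dest_dir: Path,
           items: Sequence[str] = DEFAULT_ITEMS) -> Path:
    """Copy selected server files into a timestamped zip archive and return its path."""
    stamp = datetime.now().strftime("%Y%m%d-%H%M%S")
    staging = dest_dir / f"backup-{stamp}"
    staging.mkdir(parents=True)  # fails if a backup with this timestamp already exists
    for name in items:
        src = server_dir / name
        if src.is_dir():
            shutil.copytree(src, staging / name)
        elif src.is_file():
            shutil.copy2(src, staging / name)
        # Missing items (e.g. no Nether folder) are skipped
    archive = Path(shutil.make_archive(str(staging), "zip", root_dir=staging))
    shutil.rmtree(staging)  # keep only the zip
    # testzip() returns the first corrupt member name, or None if all CRCs match
    with zipfile.ZipFile(archive) as zf:
        bad = zf.testzip()
    if bad is not None:
        raise RuntimeError(f"backup archive is corrupt at {bad}")
    return archive
```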

Steps

Estimated time: 45-90 minutes

Step 1: Back up the current world and configs

Create a full backup of world data, player files, plugins, and server.properties. Verify backup integrity before making any changes.

Tip: Store backups offsite or in cloud storage to prevent data loss.

Step 2: Check server TPS and memory usage

Run timings or a monitoring plugin to capture TPS trends and heap usage over at least 30 minutes. Note any sustained drops.

Tip: Take a baseline snapshot to compare after changes.

Step 3: Tune memory allocation

Adjust -Xms and -Xmx to safe, proportional values based on RAM available. Ensure -Xms <= -Xmx.

Tip: Avoid over-allocating RAM, which can cause GC pauses.

Step 4: Apply quick config tweaks

Lower view distance, enable whitelist if needed, and disable non-essential plugins temporarily.

Tip: Make one change at a time and test its impact.

Step 5: Test and monitor impact

Restart the server and monitor TPS, CPU, and memory for 20-30 minutes. Compare the results with your baseline.

Tip: Document results for future reference.

Step 6: Plan long-term upgrades

If TPS remains low, prepare hardware upgrades or switch to a performance-focused host. Consider staging tests first.

Tip: Use a staging environment to validate changes before production.
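The baseline snapshot and the post-change monitoring window are only useful if you compare them the same way each time. A minimal Python sketch of that comparison, assuming you have lists of readings from both windows; the function name compare_windows and the sample readings are hypothetical.

```python
from statistics import mean
from typing import Sequence


def compare_windows(baseline: Sequence[float], after: Sequence[float],
                    metric: str = "TPS", higher_is_better: bool = True) -> str:
    """Summarize how a metric's average changed between two monitoring windows."""
    before_avg, after_avg = mean(baseline), mean(after)
    delta = after_avg - before_avg
    if delta == 0:
        verdict = "unchanged"
    elif (delta > 0) == higher_is_better:
        verdict = "improved"
    else:
        verdict = "worse"
    return f"{metric}: {before_avg:.1f} -> {after_avg:.1f} ({verdict})"


# Made-up readings for illustration; for CPU usage, lower is better
print(compare_windows([16.8, 17.2, 17.5], [19.6, 19.9, 20.0]))
print(compare_windows([85, 90, 88], [70, 72, 69], metric="CPU %", higher_is_better=False))
```

Short windows are noisy, so treat small differences as inconclusive and repeat the measurement at a similar player count.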

Diagnosis: Server lag with TPS drops during peak play on a Minecraft server.

Possible Causes

- High likelihood: Insufficient RAM or misconfigured heap size

- High likelihood: Too many concurrent players

- Medium likelihood: Resource-heavy plugins/mods

- Medium likelihood: Disk I/O bottlenecks

- Low likelihood: Network latency/bandwidth constraints

Fixes

- Easy: Increase available RAM and adjust the Java heap (-Xms/-Xmx) to balanced values

- Easy: Reduce view distance and cap concurrent players

- Medium: Identify and remove heavy plugins/mods, or replace them with lighter alternatives

- Hard: Upgrade storage or move to faster hosting; optimize server software

- Medium: Tune JVM flags and switch to a performance-focused server (Paper/Purpur)

People Also Ask

What is TPS and why does it matter for overload?

TPS stands for ticks per second and measures server health. A healthy server runs near 20 TPS; lower values indicate lag and poor responsiveness.

TPS is the server's heartbeat. When it drops, movement and player actions lag behind.
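The 20 TPS target means each tick has a 50 ms budget (1000 ms / 20). Many monitoring tools report average milliseconds per tick (MSPT) instead, and the Python sketch below converts one to the other under that simple model. It caps the result at 20 because finishing a tick early does not make the server run faster; the function name is hypothetical.

```python
def tps_from_mspt(mspt: float) -> float:
    """Estimate TPS from average milliseconds per tick (MSPT).

    The server targets 20 ticks per second, i.e. a 50 ms budget per tick;
    ticks that finish early do not push TPS above 20.
    """
    if mspt <= 0:
        raise ValueError("mspt must be positive")
    return min(20.0, 1000.0 / mspt)


for mspt in (40, 50, 80, 100):
    print(f"{mspt} ms per tick -> {tps_from_mspt(mspt):.1f} TPS")
```

For example, an average of 80 ms per tick corresponds to 12.5 TPS, so the world runs at 62.5% of normal speed.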

Can I fix overload without upgrading hardware?

Yes. Optimize plugins, reduce load, adjust memory, and fine-tune server settings. If performance remains poor, hardware upgrades may be required.

Sometimes optimization is enough, but persistent lag often means hardware needs an upgrade.

Is upgrading RAM always the best solution?

RAM often helps, but it isn’t always sufficient. First identify bottlenecks (CPU, plugins, I/O) before investing in memory.

RAM helps, but it’s not a guaranteed fix if other components bottleneck performance.

Should I switch to Paper or Purpur for better performance?

Yes. Paper and Purpur add performance optimizations and profiling tools, often delivering better and more stable performance than plain Spigot.

Paper is usually the recommended starting point for performance gains.

How can I tell if a plugin is causing lag?

Use server timings to identify heavy CPU users. Disable plugins one at a time to confirm their impact and re-test.

Timings show you which plugin hogs CPU; try disabling the suspects first.
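Disabling plugins one at a time works, but with many plugins a binary search (bisection) needs far fewer restarts. This is a swapped-in technique, not the one described above, sketched in Python under two stated assumptions: exactly one plugin causes the lag, and is_laggy is a hypothetical test you run yourself (start the server with only the given plugins installed, apply load, and report whether TPS drops).

```python
from typing import Callable, Optional, Sequence, Set


def find_culprit(plugins: Sequence[str],
                 is_laggy: Callable[[Set[str]], bool]) -> Optional[str]:
    """Binary-search a plugin list for a single lag-causing plugin.

    is_laggy(enabled) is a hypothetical manual test: run the server with only
    `enabled` plugins installed and return True if the lag appears.
    Assumes exactly one plugin causes the lag.
    """
    candidates = list(plugins)
    # First confirm the lag reproduces with every plugin enabled
    if not candidates or not is_laggy(set(candidates)):
        return None
    while len(candidates) > 1:
        half = candidates[: len(candidates) // 2]
        # Keep whichever half still reproduces the lag
        if is_laggy(set(half)):
            candidates = half
        else:
            candidates = candidates[len(candidates) // 2:]
    return candidates[0]
```

With 16 plugins this needs at most five test runs (one confirmation plus four halvings) instead of up to sixteen. Plugins that depend on another plugin may fail to load when their dependency lands in the disabled half, so keep dependencies together when you split the list.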

When should I seek professional hosting help?

If you lack time, expertise, or hardware headroom, consider professional hosting support or a more powerful plan.

If optimization and upgrades don’t help, get hosting support.


The Essentials

- Identify top load contributors and address them first

- Balance RAM with CPU and disk I/O for stable TPS

- Test changes in a staging environment before live deployment

- Monitor TPS, memory, and CPU after each change to verify impact